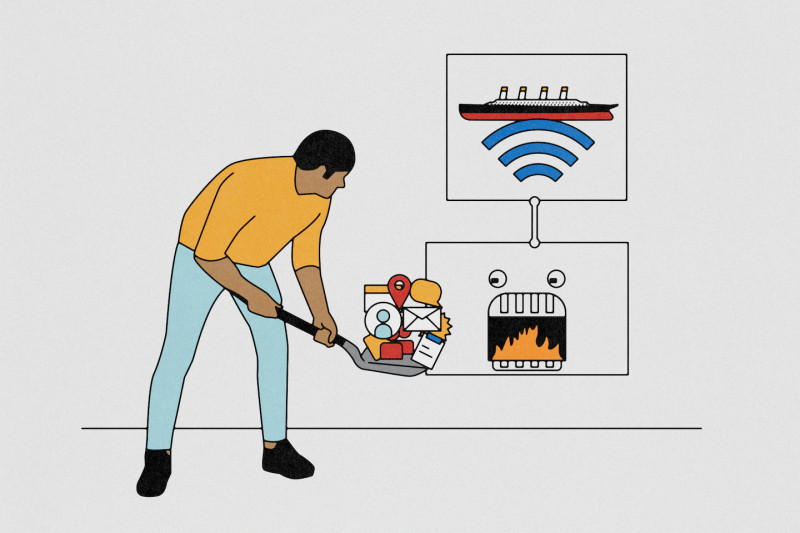

A modern digital panopticon has quietly descended upon Meta’s US employees, recording every keystroke and mouse movement and capturing periodic screenshots. This new regime, presented as technological advancement, marks a significant shift in the company's AI initiatives.

Meta's Employee Surveillance: The Digital Panopticon

Meta has discreetly installed new tracking software on the computers of its US-based employees, recording their digital interactions in detail. This data, which covers keystrokes, mouse movements, and periodic screenshots, is fed into the company's AI models for training and development. The initiative, known as the Model Capability Initiative (MCI), is part of a broader strategy called the Agent Transformation Accelerator (ATA). The AI agents at the center of this strategy are designed to perform various tasks autonomously, strengthening the company's ability to compete in the autonomous AI agent market.

The Ethics of Employee Surveillance

Meta assures employees that the data collected will not be used for performance evaluations or for any purpose other than AI training. However, the ethics of the practice remain contentious. An invasion of privacy, even in the name of technological progress, raises significant concerns about where legitimate workplace monitoring ends and surveillance begins.

Employees have expressed a mix of apprehension and curiosity. While some view it as an intrusion, others see it as an opportunity to be part of cutting-edge AI development, potentially enhancing their job security. The company has pledged transparency, yet the psychological impact of constant monitoring should not be underestimated.

Meta's Competitive Edge: Data-Driven AI Development

The data collected from employees will be used to train AI agents capable of performing a range of tasks autonomously. This initiative positions Meta to compete with industry leaders like Anthropic and OpenAI, both of which have made significant strides in the autonomous AI agent market. Meta's approach draws on its vast internal data pool, which includes data from its social media platforms and now employee interactions as well.

With these agents, Meta aims to automate routine workflows and enhance productivity. Records of how employees actually work are expected to be valuable in refining the agents and making them more efficient and effective in their roles. The approach is intended both to accelerate AI development and to ground the models in real-world data rather than synthetic examples.

Consider the broader landscape of AI development. Companies like Anthropic and OpenAI have built their AI models on vast datasets sourced from the internet. Meta's approach, however, is more controlled and targeted, focusing on real-world interactions within a specific environment. This difference in data sourcing could lead to more nuanced and context-aware AI agents, capable of performing tasks with a deeper understanding of user behavior and workplace dynamics.

Despite the potential benefits, the ethical and privacy concerns surrounding this initiative remain significant. As AI continues to evolve, the line between innovation and intrusion becomes increasingly blurred. Meta’s decision to use employee data for AI training underscores the tension between technological advancement and employee rights, and it raises critical questions about the limits of corporate surveillance and the ethics of harvesting data in the workplace.

This initiative could also set a precedent for other tech giants looking to use internal data for AI training. The success of the program will likely influence how other companies approach data collection and AI development, potentially leading to a new era of data-driven innovation. However, ethical considerations and potential backlash from employees and regulators will also shape the future of such practices.

Is this the dawn of a new era in AI development, or the beginning of a more sinister form of corporate surveillance?